Robots.txt Test: How to Check If Your File Is Working

SEO

Apr 27, 2026

Your robots.txt file quietly controls how search engines crawl your website. When it's working properly, it guides crawlers toward the content that matters. When it's misconfigured, it can stop crawlers from reaching important pages without you even noticing.

Running a proper robots.txt test is one of the simplest ways to avoid serious SEO issues.

What Is a Robots.txt File?

A robots.txt file is a simple text file placed in your website’s root directory (e.g., yourdomain.com/robots.txt).

Its role is to tell search engine crawlers:

- Which pages or sections they can access

- Which ones they should ignore

It doesn't enforce rules; it gives instructions that most search engines follow.

Why Testing Robots.txt Matters

Even a small mistake in your robots.txt file can lead to:

- Important pages not being indexed

- Entire sections of your website being hidden from search

- Confusion for search engine crawlers

This is why running a robots.txt test regularly is important, especially after:

- Website updates

- Redesigns

- URL structure changes

- CMS migrations
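After a redesign or migration, the quickest regression check is to compare the old and new files against the same set of URLs. The sketch below does that with Python's standard-library `urllib.robotparser`. The helper names (`_parse`, `crawl_changes`), the example URLs and the `Googlebot` token are illustrative assumptions, and the module's matching is simpler than Google's, so treat the output as an approximation for complex files.

```python
from urllib.robotparser import RobotFileParser


def _parse(robots_txt: str) -> RobotFileParser:
    """Build a parser from robots.txt text instead of fetching it over HTTP."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def crawl_changes(old_txt: str, new_txt: str, urls: list[str],
                  agent: str = "Googlebot") -> dict[str, str]:
    """Return the URLs whose crawl permission differs between two robots.txt versions."""
    old, new = _parse(old_txt), _parse(new_txt)
    changes = {}
    for url in urls:
        before, after = old.can_fetch(agent, url), new.can_fetch(agent, url)
        if before != after:
            changes[url] = "now blocked" if before else "now allowed"
    return changes


old_file = "User-agent: *\nDisallow: /admin/\n"
new_file = "User-agent: *\nDisallow: /\n"  # e.g. a staging file that slipped into production
print(crawl_changes(old_file, new_file, [
    "https://example.com/",
    "https://example.com/blog/",
    "https://example.com/admin/users",  # blocked in both versions, so not reported
]))
# {'https://example.com/': 'now blocked', 'https://example.com/blog/': 'now blocked'}
```

Only URLs whose status actually changed are reported, so an empty result after a deployment is the outcome you want.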

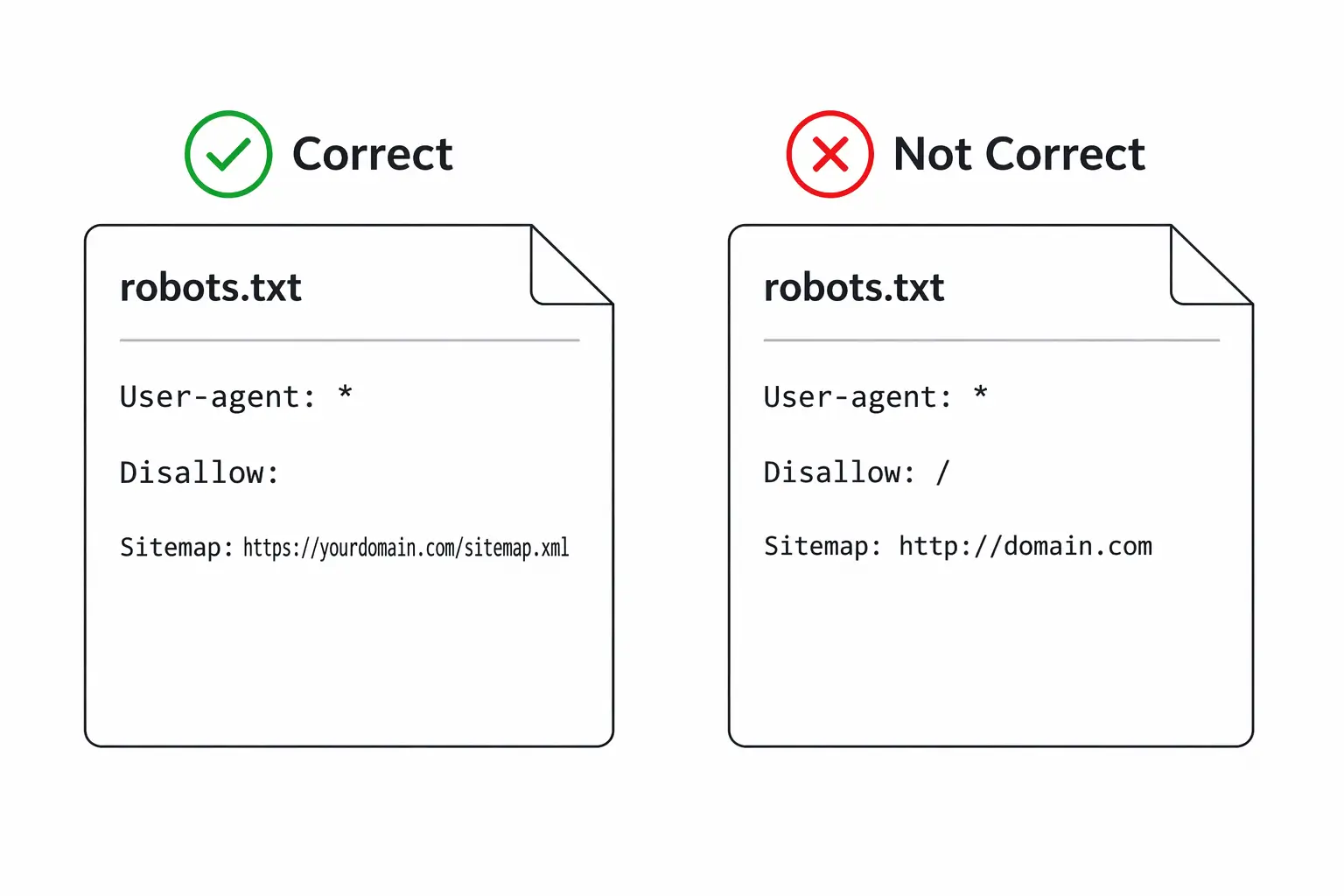

Common Robots.txt Issues

Before testing, it helps to know what can go wrong.

Typical problems include:

- Blocking entire directories by mistake

- Incorrect syntax (missing slashes, wrong formatting)

- Disallowing pages that should be indexed

- Conflicts with your sitemap (listing URLs that robots.txt blocks) or with meta robots tags

These issues are often not visible unless you actively check for them.
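Syntax slips such as a missing colon or a path without its leading slash can be caught automatically before the file goes live. Below is a minimal, hypothetical `lint_robots` sketch in Python. Its rules are assumptions based on the common directives (`User-agent`, `Allow`, `Disallow`, `Sitemap`), not a full implementation of the robots.txt standard, so it will also flag non-standard directives such as `Crawl-delay` as unrecognized.

```python
KNOWN_DIRECTIVES = {"user-agent", "allow", "disallow", "sitemap"}


def lint_robots(text: str) -> list[str]:
    """Return human-readable warnings for common robots.txt syntax slips."""
    problems = []
    seen_user_agent = False
    for number, raw in enumerate(text.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # ignore comments and surrounding spaces
        if not line:
            continue
        if ":" not in line:
            problems.append(f"line {number}: missing ':' after the directive name")
            continue
        name, value = (part.strip() for part in line.split(":", 1))
        name = name.lower()
        if name not in KNOWN_DIRECTIVES:
            problems.append(f"line {number}: unrecognized directive '{name}'")
        elif name == "user-agent":
            seen_user_agent = True
        elif name in ("allow", "disallow"):
            if not seen_user_agent:
                problems.append(f"line {number}: '{name}' appears before any User-agent line")
            # An empty Disallow is valid (it allows everything), so only check non-empty paths.
            if value and not value.startswith(("/", "*")):
                problems.append(f"line {number}: path '{value}' should start with '/'")
    return problems


sample = """User-agent: *
Disallow: admin/
Noindex: /old-page/
Disallow /tmp/
Sitemap: https://example.com/sitemap.xml
"""
for warning in lint_robots(sample):
    print(warning)
```

The `Noindex` line in the sample is flagged on purpose: Google stopped supporting that directive in robots.txt in 2019, so relying on it is itself a common mistake.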

How to Check If Robots.txt Is Working

Here are the most practical ways to check if your file is working correctly.

1. Check the File Directly

Open your browser and go to:

yourdomain.com/robots.txt

Make sure:

- The file loads correctly

- There are no obvious errors

- The structure looks clean and intentional

This is a quick first step, but not enough on its own.
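To script this first check, for example after every deployment, you can request the file and look at the HTTP status as well as the body. This is a minimal Python sketch using only the standard library; `robots_url` and `fetch_robots` are hypothetical helper names and `robots-check/0.1` is a placeholder user-agent string. A robots.txt file only applies to the exact scheme, host and port it is served from, so the helper always resolves to `/robots.txt` on the page's own host (a file on `www.example.com` does not cover `blog.example.com`).

```python
from urllib.error import HTTPError
from urllib.parse import urlsplit, urlunsplit
from urllib.request import Request, urlopen


def robots_url(page_url: str) -> str:
    """Map any page URL to the robots.txt URL that governs it (same scheme, host, port)."""
    parts = urlsplit(page_url)
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


def fetch_robots(page_url: str, timeout: float = 10.0) -> tuple[int, str]:
    """Fetch robots.txt and return (HTTP status, body).

    HTTP error responses are returned as (status, ""); DNS and connection
    failures are not caught and propagate as URLError.
    """
    request = Request(robots_url(page_url), headers={"User-Agent": "robots-check/0.1"})
    try:
        with urlopen(request, timeout=timeout) as response:
            return response.status, response.read().decode("utf-8", errors="replace")
    except HTTPError as error:
        return error.code, ""


# Example (makes a real network request):
# status, body = fetch_robots("https://example.com/some/page")
```

A 200 with the body you expect is the goal. Google's robots.txt documentation describes 4xx responses (other than 429) as meaning there are no crawl restrictions, and 5xx responses as a temporary signal to treat the whole site as disallowed, so either deserves a closer look.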

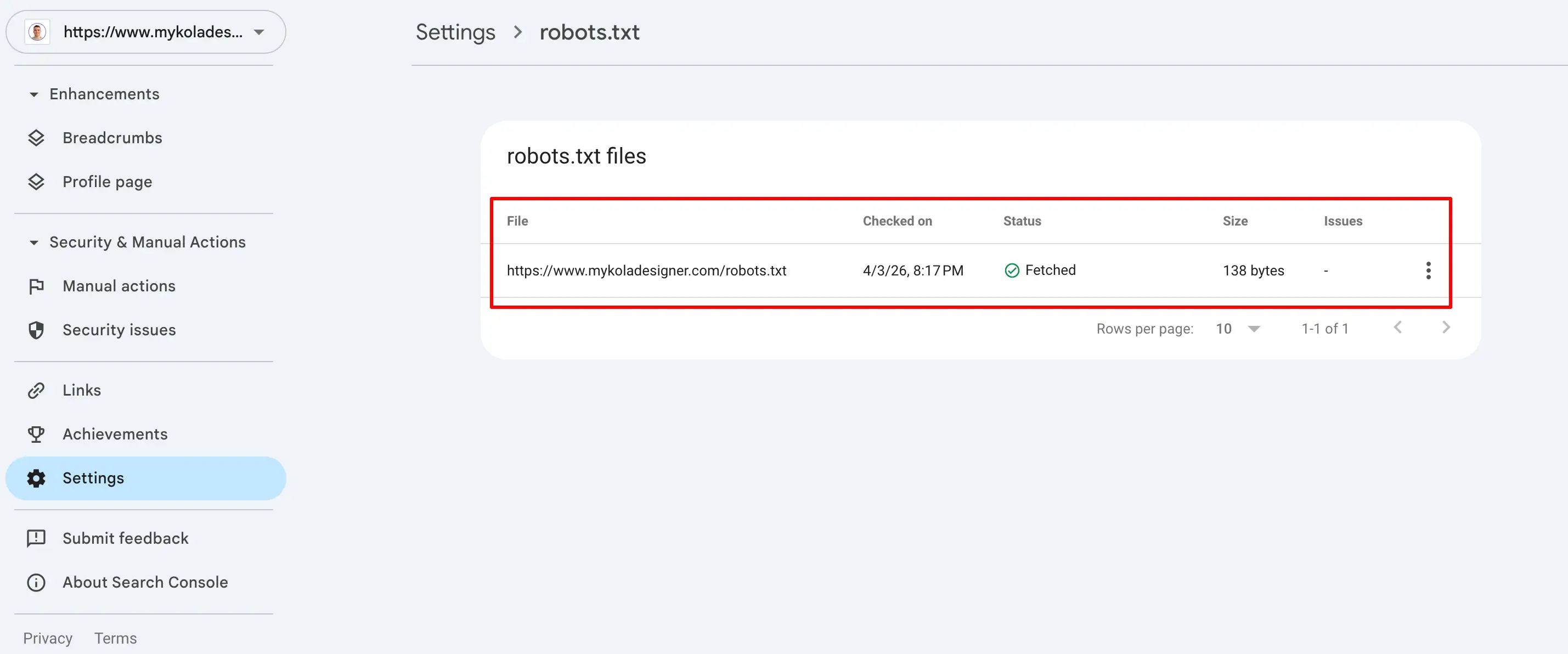

2. Use Google Search Console

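One of the most reliable ways to perform a robots.txt test is through Google Search Console. Its robots.txt report (which replaced the older robots.txt Tester) and the URL Inspection tool let you:

- See whether Google could fetch your file, and when it last did

- Spot rules that Google could not parse

- Check whether a specific URL is blocked by robots.txt

This shows how Google actually reads your rules, which makes your robots.txt analysis more accurate than reviewing the file by eye.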

3. Test Specific URLs

For each important URL, ask:

- Is this page allowed to be crawled?

- Is it accidentally blocked?

You can test URLs individually using tools or by reviewing your robots.txt rules.

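Example robots.txt file:

User-agent: *
Disallow: /admin/

This tells every crawler (User-agent: *) not to crawl any URL whose path starts with /admin/, such as /admin/login. The bare /admin path, without the trailing slash, is not covered by this rule.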

Small details like this can have big consequences if applied incorrectly.
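You can run the same kind of per-URL check locally against the example rules. This sketch uses Python's standard-library `urllib.robotparser`; the `check_urls` name, the URLs and the `Googlebot` token are only examples. The module matches paths by simple prefix and applies the first matching rule in a group, whereas Google picks the most specific (longest) matching rule and supports `*` and `$` wildcards, so treat the result as a sanity check rather than Google's verdict.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
"""


def check_urls(robots_txt: str, urls: list[str], agent: str = "Googlebot") -> dict[str, bool]:
    """Map each URL to True if the given crawler may fetch it under these rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {url: parser.can_fetch(agent, url) for url in urls}


results = check_urls(ROBOTS_TXT, [
    "https://example.com/admin/settings",  # inside /admin/, so blocked
    "https://example.com/admin",           # no trailing slash, so the prefix does not match
    "https://example.com/blog/post",
])
for url, allowed in results.items():
    print("allowed" if allowed else "BLOCKED", url)
```

Keep the directives on consecutive lines when you feed text to this parser: it treats a blank line as the end of a group, so a blank line between `User-agent` and `Disallow` would make it drop the rule.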

4. Use SEO Crawling Tools

Tools like Screaming Frog SEO Spider and Ahrefs can simulate how search engines crawl your site and show:

- Blocked pages

- Crawl restrictions

- Indexability issues

This is especially useful for larger websites.
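One of the checks these crawlers perform, finding pages listed in your sitemap that robots.txt blocks, is easy to approximate yourself. A minimal Python sketch, assuming a regular URL sitemap (not a sitemap index) in the standard `http://www.sitemaps.org/schemas/sitemap/0.9` namespace and the standard-library `urllib.robotparser`, whose matching is simpler than Google's. `blocked_sitemap_urls` is a hypothetical name.

```python
import xml.etree.ElementTree as ET
from urllib.robotparser import RobotFileParser

SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def blocked_sitemap_urls(robots_txt: str, sitemap_xml: str, agent: str = "Googlebot") -> list[str]:
    """List sitemap <loc> URLs that robots.txt stops the given crawler from fetching."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    root = ET.fromstring(sitemap_xml)
    locs = [loc.text.strip() for loc in root.iter(f"{SITEMAP_NS}loc") if loc.text]
    return [url for url in locs if not parser.can_fetch(agent, url)]


robots = "User-agent: *\nDisallow: /checkout/\n"
sitemap = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/products/shoes</loc></url>
  <url><loc>https://example.com/checkout/cart</loc></url>
</urlset>"""
print(blocked_sitemap_urls(robots, sitemap))
```

Any URL this returns is a conflict: the sitemap asks search engines to crawl it while robots.txt tells them not to.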

How to Know If Your Robots.txt Is Working

If you're wondering how to test a robots.txt file, start with what a correct configuration should do:

- Allow important pages to be crawled

- Block only what needs to stay private or irrelevant

- Not conflict with your SEO strategy
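One way to make these criteria testable is to write down, for a handful of representative URLs, whether each one should be crawlable, and then flag any URL where the rules disagree. This is a hypothetical `unmet_expectations` helper in Python, built on the standard-library `urllib.robotparser`; the rules and URLs are invented for illustration.

```python
from urllib.robotparser import RobotFileParser


def unmet_expectations(robots_txt: str, expected: dict[str, bool],
                       agent: str = "Googlebot") -> list[str]:
    """Return URLs whose crawlability differs from what you intended (True = should be crawlable)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url, should_crawl in expected.items()
            if parser.can_fetch(agent, url) != should_crawl]


robots = "User-agent: *\nDisallow: /admin/\nDisallow: /products/\n"
expected = {
    "https://example.com/": True,
    "https://example.com/products/shoes": True,  # an important page
    "https://example.com/admin/login": False,    # private, should stay blocked
}
print(unmet_expectations(robots, expected))
```

Here the check catches `/products/shoes`, an important page that the `Disallow: /products/` rule blocks by mistake.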

Signs everything is working:

- Key pages are indexed

- No unexpected drops in traffic

- No critical crawl errors

If something feels off, it’s often worth reviewing both robots.txt and overall site structure together.

Common Mistake: Blocking Too Much

One of the most frequent issues is over-blocking.

For example:

User-agent: *
Disallow: /

This stops search engines from crawling your entire website.

It’s sometimes used in staging environments, but if it goes live, it can remove your site from search results entirely.
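Because this usually happens when a staging file is copied to production, it is worth failing a deployment automatically if the homepage is blocked. A sketch of such a guard in Python using the standard-library `urllib.robotparser`; the function name and the crawler tokens in `CRAWLERS` are assumptions, so adjust them to the crawlers you care about.

```python
from urllib.robotparser import RobotFileParser

CRAWLERS = ["*", "Googlebot", "Bingbot"]  # example tokens; add the ones you care about


def agents_blocked_from_home(robots_txt: str, home_url: str = "https://example.com/") -> list[str]:
    """Return the crawler tokens that may not fetch the homepage under these rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in CRAWLERS if not parser.can_fetch(agent, home_url)]


production = "User-agent: *\nDisallow: /\n"
blocked = agents_blocked_from_home(production)
if blocked:
    print("Refusing to deploy: homepage blocked for", ", ".join(blocked))
```

In a CI pipeline you would exit with a non-zero status (for example `sys.exit(1)`) instead of printing. Checking named crawlers as well as `*` also catches a site-wide block hidden in a crawler-specific group.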

Robots.txt vs Meta Robots

It’s important not to confuse these two:

- Robots.txt controls crawling

- Meta robots tags control indexing

A page blocked in robots.txt can still appear in search results if other pages link to it, usually without a description, because its content was never crawled.

This is why testing both is important.
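The two interact in a way that is easy to miss: a crawler blocked by robots.txt never downloads the page, so it never sees a `noindex` tag on it. To keep a page out of the index with `noindex`, the page has to stay crawlable. The sketch below checks both signals for one page. It is a Python illustration under assumptions: `audit_page` and `MetaRobotsFinder` are hypothetical names, the HTML is passed in rather than fetched, and it ignores the `X-Robots-Tag` HTTP header, which can carry the same directives.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class MetaRobotsFinder(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        values = {name: (value or "") for name, value in attrs}  # attribute names arrive lowercased
        if values.get("name", "").lower() == "robots":
            self.directives += [d.strip().lower() for d in values.get("content", "").split(",")]


def audit_page(robots_txt: str, url: str, html: str, agent: str = "Googlebot") -> dict:
    """Report whether a page is crawlable, whether it carries noindex, and what that combination means."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    crawlable = parser.can_fetch(agent, url)
    finder = MetaRobotsFinder()
    finder.feed(html)
    noindex = "noindex" in finder.directives
    if not crawlable and noindex:
        note = "conflict: robots.txt blocks the page, so the noindex tag is never seen"
    elif not crawlable:
        note = "not crawled, but the URL may still be indexed from links"
    elif noindex:
        note = "crawled, then kept out of the index by meta robots"
    else:
        note = "crawlable and indexable"
    return {"crawlable": crawlable, "noindex": noindex, "note": note}


robots = "User-agent: *\nDisallow: /private/\n"
page = '<html><head><meta name="robots" content="noindex, nofollow"></head></html>'
print(audit_page(robots, "https://example.com/private/report", page))
```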

UX & SEO Insight

From a UX and SEO perspective, robots.txt is not just a technical file; it directly affects visibility.

If search engines are unable to access your content correctly:

- Your pages won’t rank

- Users won’t find your website

- Your design and content won’t matter

This is one of those behind-the-scenes elements that has a direct impact on performance.

Final Thoughts

Running a robots.txt test doesn’t take long, but it can prevent major issues. It’s one of the simplest checks you can do, and one of the easiest to overlook.

If you're unsure whether your robots.txt file is configured correctly or aligned with your website structure, it’s worth reviewing it as part of a broader audit.

I help clients with technical SEO audits, identifying issues like this and fixing them before they impact performance.

Because when search engines can’t access your website properly, everything else becomes harder to fix later.